In a core-for-core comparison, Peddibhotla estimates Genoa outperforms Intel's Ice Lake generation by closer to 50 percent in SPECrate 2017's integer benchmark and 78-96 percent in the floating point benchmark.

Intel, meanwhile, has held an advantage over AMD in workloads that rely on the AVX-512 instruction set for things like deep learning and AI inferencing.

Unfortunately, repeated delays to Sapphire Rapids have put it, and Argonne National Laboratory's Aurora supercomputer, woefully behind schedule.

As of the latest delay earlier this month, Intel expects the first volume shipments of the chip to hit the market in Q1 2023.

And of course, AMD didn't miss the opportunity to capitalize on Intel's struggle bringing the chip to market. Pointing to Intel's 40-core Xeon Platinum 8380 CPUs - for the moment the chipmaker's fastest available - AMD claims Genoa is anywhere from 2.5x to 3x faster in the popular SPECrate 2017 floating point and integer benchmarks respectively. Of course, that's with more than twice the cores per socket.

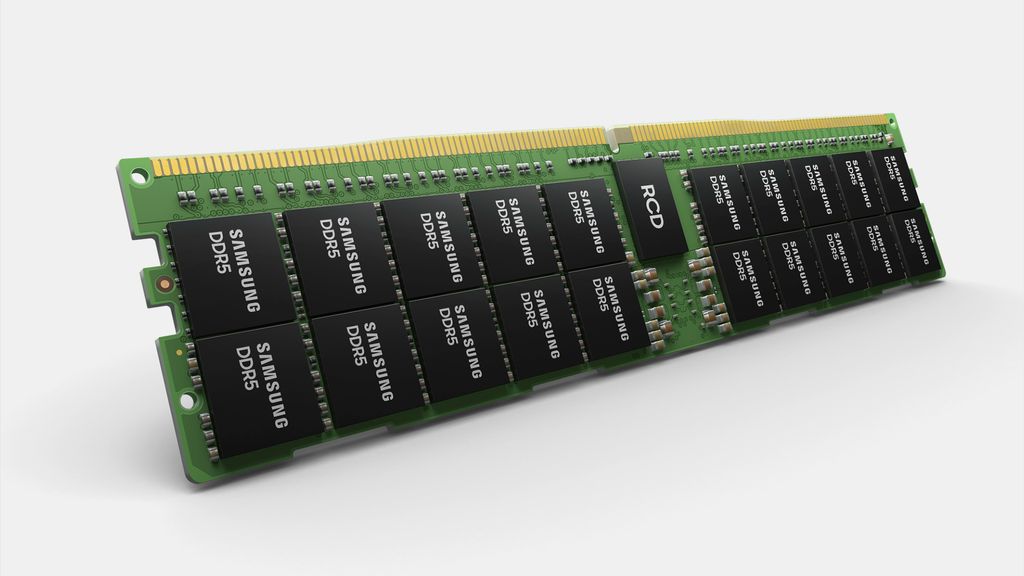

Genoa also increases the number of interfaces to 160 lanes of PCIe 5.0 and adds 64 lanes dedicated to CXL. Of the PCIe lanes, 32 can be dedicated to SATA connectivity, while dual socket systems gain an additional 12 "bonus" lanes of PCIe 3.0 connectivity.

Speaking of CXL, Genoa is the first x86 platform with support for the cache-coherent interface. While future iterations of CXL will enable full composable infrastructure, early implementations of the tech are focused squarely on memory expansion. This is where AMD is focusing its attention for its first foray into CXL. Genoa supports a modified version of CXL 1.1 that backports support for tiered-memory configurations. And AMD clearly expects CXL will be a hit in the datacenter as it's already extended its memory encryption tech used in confidential computing - called SEV-SNP - for these memory expansion modules out of the box.

Even though AMD supports CXL, that doesn't mean the ecosystem is necessarily ready to take advantage of the new tech. While some vendors like Samsung and Astera Labs have announced CXL memory modules, the standard is still in its infancy.

And those hoping to take advantage of more advanced CXL accelerators will have to wait until AMD ships a CPU that fully supports the CXL 2.0 spec required for technologies like memory pooling. While AMD may have a head start on CXL, PCIe 5.0, and DDR5 in the datacenter, it won't be long before Intel brings its Xeon processors back into feature parity.

Intel had hoped to beat AMD to market with its 4th-Gen Xeon Scalable processor, codenamed Sapphire Rapids, by more than a year.

However, the IPC gains are only part of the story. Ram Peddibhotla, AMD VP of Epyc product management, claims that, combined with higher core counts and clock speeds, the company's flagship 96-core Epyc 4 CPUs are twice as fast as last year's 64-core Milan parts in a variety of cloud, high-performance compute (HPC), and enterprise benchmarks. As usual we recommend taking these claims with a healthy grain of salt.

Taking a peek under Genoa's now even larger heat spreader - yep, there's a new socket too - reveals just how AMD has managed to cram so many cores into a single package. The larger package makes room for four additional Core Complex Dies (CCDs), bringing the total to 12. The actual core layout of these chiplets, however, remains largely unchanged from Milan, with eight cores sharing 32MB of L3 cache between them. What is new is the move from TSMC's 7nm to its more advanced 5nm process and the use of AMD's Zen 4 cores, which double the L2 cache to 1MB per core.

While AMD will tell you Epyc 4 squeezes more work out of each watt, that doesn't change the fact that the higher power envelope poses a challenge for server builders tasked with finding a way to dissipate all of that heat, and the datacenter operators that have to power those systems.

Looking beyond raw performance, Epyc 4 also delivers a number of memory and I/O improvements over Milan. The CPUs are AMD's first datacenter chips with support for DDR5 memory. Genoa's I/O die - which is now based on a TSMC 6nm process as opposed to GlobalFoundries' 14nm tech - supports 12 channels of DDR5 at 4,800MT/sec and up to 6TB per socket. According to AMD, this works out to a maximum theoretical memory bandwidth of 460GB/sec when all 12 channels are populated with 4,800MT/sec DDR5 memory. Of course, even at one DIMM per channel, populating all those channels could prove tricky, especially in traditional dual socket systems.
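
For the curious, the 460GB/sec memory bandwidth figure AMD quotes can be roughly reproduced with some back-of-envelope arithmetic. The sketch below is ours, not AMD's: it assumes each DDR5 channel moves 64 bits (8 bytes) of data per transfer, which the article does not state, and the function and variable names are made up for illustration.

```python
# Back-of-envelope check of the peak DDR5 bandwidth quoted for Genoa.
# Assumption (not stated in the article): each channel carries 64 bits
# (8 bytes) of data per transfer, with ECC bits excluded.

CHANNELS = 12                      # DDR5 channels per socket (from the article)
TRANSFERS_PER_SEC = 4_800 * 10**6  # 4,800 MT/sec (from the article)
BYTES_PER_TRANSFER = 8             # assumed 64-bit data width per channel


def peak_bandwidth_gb_per_sec(channels, transfers_per_sec, bytes_per_transfer):
    """Return theoretical peak bandwidth in GB/sec, taking 1 GB as 10**9 bytes."""
    return channels * transfers_per_sec * bytes_per_transfer / 1e9


per_channel = peak_bandwidth_gb_per_sec(1, TRANSFERS_PER_SEC, BYTES_PER_TRANSFER)
per_socket = peak_bandwidth_gb_per_sec(CHANNELS, TRANSFERS_PER_SEC, BYTES_PER_TRANSFER)

print(f"per channel: {per_channel:.1f} GB/sec")
print(f"per socket:  {per_socket:.1f} GB/sec")
```

Under that assumption each channel tops out at about 38.4GB/sec and a fully populated socket at about 460.8GB/sec, in line with AMD's number. This is a theoretical ceiling only; real workloads will see less.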
